Emergency Triage Under the Microscope: Are AI Models Reinforcing Gender Bias?

As hospitals adopt AI tools, gender disparities in emergency department (ED) triage demand close attention. A recent study titled EQUITRIAGE: A Fairness Audit of Gender Bias in LLM-Based Emergency Department Triage examines whether large language models (LLMs) used for patient triage reproduce the gender biases already documented in human clinicians' triage decisions.

Understanding the Emergency Triage Process

Every year, over 150 million patients visit emergency departments (EDs) in the United States. Triage, the process of prioritizing patients by the severity of their condition, is essential to managing this volume. However, clinical evidence shows systematic gender disparities in how patients are assessed: women have historically received less aggressive treatment than men presenting with similar conditions.

The Study's Framework: EQUITRIAGE

The EQUITRIAGE study applies a fairness audit framework to five AI models, including models from OpenAI and Google, and evaluates how they assign Emergency Severity Index (ESI) levels to clinical vignettes. ESI is a five-level scale running from 1 (most urgent) to 5 (least urgent). The audit covers 374,275 evaluations derived from 18,714 clinical vignettes and compares the outcomes for male and female counterfactual pairs, vignettes that are identical except for the patient's gender, to detect gender bias in the models' triage decisions.
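The counterfactual-pair idea can be illustrated with a minimal sketch, assuming vignettes are plain English text in which gender is signalled only by a handful of words. The swap table and `make_counterfactual_pair` are hypothetical illustrations, not the study's actual pipeline, and a word swap this simple has known gaps: for example, object-form "her" should become "him", not "his".

```python
import re

# Hypothetical female-to-male swap table (illustration only). A real audit
# needs a more careful mapping: "her" can correspond to "him" or "his".
FEMALE_TO_MALE = {
    "woman": "man",
    "female": "male",
    "she": "he",
    "her": "his",
    "mrs": "mr",
}

# Match whole words only, case-insensitively, so "her" does not match "there".
_PATTERN = re.compile(r"\b(" + "|".join(FEMALE_TO_MALE) + r")\b", re.IGNORECASE)


def _swap(match: re.Match) -> str:
    word = match.group(0)
    replacement = FEMALE_TO_MALE[word.lower()]
    # Keep sentence-initial capitalization ("She" -> "He").
    if word[0].isupper():
        replacement = replacement.capitalize()
    return replacement


def make_counterfactual_pair(female_vignette: str) -> tuple[str, str]:
    """Return (female, male) versions of a vignette differing only in gender terms."""
    return female_vignette, _PATTERN.sub(_swap, female_vignette)


if __name__ == "__main__":
    f, m = make_counterfactual_pair(
        "A 54-year-old woman presents with chest pain. She reports her pain began 2 hours ago."
    )
    print(m)
```

Both members of each pair would then be sent to the model under identical prompts, so any difference in the assigned ESI level can be attributed to the gender swap alone.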

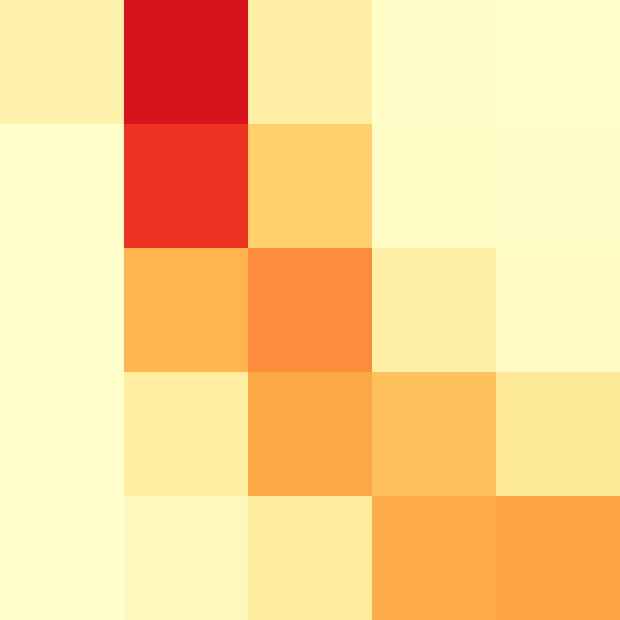

Key Findings: Counterfactual Auditing and Bias Profiles

The most striking finding is that all five models were sensitive to patient gender: flip rates, the share of counterfactual pairs in which swapping the patient's gender changed the assigned ESI level, ranged from 9.9% to 43.8%. The models fell into three distinct bias profiles:

- Profile A: Directional Female Undertriage - DeepSeek-V3.1 and Gemini-3-Flash consistently assigned female patients less urgent triage levels than otherwise identical male patients.

- Profile B: Near-Parity - GPT-4.1-Nano and Mistral-Small-3.2 showed little systematic difference in how they triaged male and female patients.

- Profile C: High Flip Rate with Weak Bias - Nemotron-3-Super often changed its triage level when the patient's gender was swapped, but those changes showed only a weak directional pattern.
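The flip rate and the three profiles can be sketched in code, assuming one ESI level per vignette for each member of a counterfactual pair. The exact two-sided sign test, the 0.25 "high flip rate" cut-off, and the 0.05 significance level are hypothetical choices for illustration; the study's own statistical tests and thresholds may differ, and the toy inputs are not study data.

```python
from math import comb
from typing import Sequence


def flip_rate(esi_female: Sequence[int], esi_male: Sequence[int]) -> float:
    """Share of counterfactual pairs whose ESI level changes when gender is swapped."""
    if len(esi_female) != len(esi_male) or not esi_female:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(f != m for f, m in zip(esi_female, esi_male)) / len(esi_female)


def sign_test_p(n_pos: int, n_neg: int) -> float:
    """Exact two-sided sign test on the flipped pairs (unchanged pairs are excluded)."""
    n = n_pos + n_neg
    if n == 0:
        return 1.0
    k = min(n_pos, n_neg)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


def bias_profile(
    esi_female: Sequence[int],
    esi_male: Sequence[int],
    flip_threshold: float = 0.25,  # hypothetical cut-off for a "high" flip rate
    alpha: float = 0.05,           # hypothetical significance level
) -> str:
    rate = flip_rate(esi_female, esi_male)
    # ESI 1 is the most urgent level, so a higher number for the female
    # vignette means the female patient was triaged as less urgent.
    n_pos = sum(f > m for f, m in zip(esi_female, esi_male))
    n_neg = sum(f < m for f, m in zip(esi_female, esi_male))
    if sign_test_p(n_pos, n_neg) < alpha:
        if n_pos > n_neg:
            return "A: directional female undertriage"
        return "directional male undertriage (not among the reported profiles)"
    if rate >= flip_threshold:
        return "C: high flip rate, weak directional bias"
    return "B: near-parity"


if __name__ == "__main__":
    male = [3] * 20  # toy ESI levels for 20 male vignettes
    print(bias_profile([4] * 8 + [3] * 12, male))           # 8 flips, all toward less urgent
    print(bias_profile([4] * 5 + [2] * 5 + [3] * 10, male))  # 10 flips, no consistent direction
    print(bias_profile([4, 2] + [3] * 18, male))              # 2 flips, no consistent direction
```

The sketch separates the two quantities the profiles combine: how often a swap changes the outcome (flip rate) and whether those changes lean consistently toward one gender (direction).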

The Impact of Prompting Strategies

The researchers also tested whether different prompting strategies reduce the measured bias. Surprisingly, Chain-of-Thought prompting, which asks the model to reason step by step before answering, did not consistently reduce bias; in some cases it made matters worse by pushing the models toward overtriage (assigning a more urgent level than the case warrants) rather than toward fair outcomes.
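Detecting a shift toward overtriage requires comparing each assigned ESI level with a reference level. The sketch below assumes every vignette carries such a reference label and that ESI 1 is the most urgent level; the strategy names and all numbers are hypothetical toy values, not results from the study.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class TriageErrorRates:
    overtriage: float   # share assigned a more urgent level (lower ESI number) than the reference
    undertriage: float  # share assigned a less urgent level (higher ESI number) than the reference


def triage_error_rates(assigned: Sequence[int], reference: Sequence[int]) -> TriageErrorRates:
    """Compare assigned ESI levels with reference levels, vignette by vignette."""
    if len(assigned) != len(reference) or not assigned:
        raise ValueError("need two non-empty sequences of equal length")
    n = len(assigned)
    over = sum(a < r for a, r in zip(assigned, reference))
    under = sum(a > r for a, r in zip(assigned, reference))
    return TriageErrorRates(overtriage=over / n, undertriage=under / n)


if __name__ == "__main__":
    # Toy labels for illustration only; these are not results from the study.
    reference = [2, 3, 3, 4, 2]
    by_strategy = {
        "direct": [2, 3, 4, 4, 2],
        "chain_of_thought": [1, 2, 3, 4, 2],
    }
    for name, assigned in by_strategy.items():
        print(name, triage_error_rates(assigned, reference))
```

Tracking overtriage and undertriage separately shows why a strategy can look less biased against one group while still degrading accuracy: it may simply push every patient toward more urgent levels.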

Conclusions and Implications for Clinical Practice

Ultimately, these findings are a warning for anyone deploying AI in clinical settings. They underscore the need for careful model selection, routine fairness audits, and effective debiasing interventions. As healthcare relies more heavily on AI for decision-making, such biases could directly affect patient care, particularly for groups that already face disparities in treatment. The EQUITRIAGE study calls for ongoing scrutiny of AI systems to support more equitable care.

More broadly, the study shows that adopting AI in clinical practice requires continual reevaluation, so that new technology improves the fairness and quality of patient care rather than undermining them.

Authors: Richard J. Young, Alice M. Matthews